SpAML: Spoofing Users In Azure AD With SAML Claims Transformations¶

Those who believe SAML is dead should take a look at the Azure AD Application Gallery. While the authentication standard finished baking almost two decades ago, it’s still a staple for integrating applications with Azure AD. As of writing, the Azure AD App Gallery has 2015 applications available for SSO integration, and 1335 of them use SAML, roughly two-thirds. Beyond this, there are thousands of line-of-business (LOB) and other 3rd party applications that don’t exist in the gallery but leverage SAML. Many well-known applications and platforms are published in the gallery using SAML: Salesforce, Google Workspace/Google Cloud, AWS, SAP, Oracle Cloud, ServiceNow, Workday… the list goes on and on.

With the ease of federated identity, not only are we tying other cloud providers to Azure AD, but we are also bringing in business-critical applications. With this, we need to keep in mind that threat actors are not always targeting critical IT resources; one can do plenty of damage with access to records and data stored within SaaS applications.

Azure AD makes it easier than ever to spoof (impersonate) a user within a SAML response. This relatively easy technique allows us to impersonate a highly privileged user in the target application. At the same time, authentication behavior for all other users of the application, including the legitimate user being spoofed, will not change. If your organization leverages SCIM for user provisioning, which is the direction the industry is going, spoofing is even more likely to go undetected, as optional attributes in the SAML response will go unused by the application.

Within Azure AD, you do not need to be a Global Administrator to configure an Enterprise Application. Anyone with the Application Administrator or Cloud Application Administrator role, or anyone assigned as Owner of a specific application, can manage it. From a usability perspective, Microsoft pushes delegation of ownership to the business units. The users who can now manage the application, however, likely fall below the bar for PIM or any privilege separation. Suffice it to say, this widens our potential pool of targets in an organization; Global Administrator is not needed for movement into target applications, just whoever has ownership. Likewise, it’s ripe for abuse by insider threats.

Azure AD SAML response primer¶

For a full refresher on the SAML authentication flow, I’ll refer to the IDPro Body of Knowledge Article, Cloud Service Authenticates Via Delegation – SAML. To understand the spoofing technique, it is enough to know that the SAML response, which is passed from the Identity Provider (IdP, Azure AD) to the application (SP, Service Provider) via the user, contains both required and optional claims. One of these required claims is the name identifier, referred to in the response as the NameID.

NameID is a unique attribute that represents the user within the application. It is what the application uses to look up the user within its internal user database.

Azure AD defaults to the Email Address format (urn:oasis:names:tc:SAML:1.1:nameid-format:emailAddress) for NameID, which is also a common pattern these days.

As an example, I authenticate to Azure AD as nestor.wilke@fabrikam.cloud, and subsequently when I connect to an Enterprise Application, say AWS, the SAML response contains the NameID below. On the identity layer, AWS uses this to match Nestor to his user account.

<NameID Format="urn:oasis:names:tc:SAML:1.1:nameid-format:emailAddress">nestor.wilke@fabrikam.cloud</NameID>
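To make the application's side of this concrete, here is a minimal sketch of how a service provider might pull the NameID out of an assertion before looking up the user. The `Subject` fragment is trimmed and illustrative (a real response is signed and carries far more), and `extract_name_id` is a hypothetical helper name, not something taken from AWS or Azure AD.

```python
import xml.etree.ElementTree as ET

# Namespace used for assertion elements in SAML 2.0.
SAML_NS = {"saml": "urn:oasis:names:tc:SAML:2.0:assertion"}

# Illustrative, unsigned fragment; a real SAML response contains much more.
fragment = """
<Subject xmlns="urn:oasis:names:tc:SAML:2.0:assertion">
  <NameID Format="urn:oasis:names:tc:SAML:1.1:nameid-format:emailAddress">nestor.wilke@fabrikam.cloud</NameID>
</Subject>
"""


def extract_name_id(xml_text):
    """Return (format, value) of the NameID found directly under the root element."""
    root = ET.fromstring(xml_text.strip())
    node = root.find("saml:NameID", SAML_NS)
    if node is None:
        raise ValueError("no NameID element found")
    return node.get("Format"), (node.text or "").strip()


name_id_format, name_id = extract_name_id(fragment)
print(name_id_format)
print(name_id)
```

Whatever string ends up in `name_id` is what the application trusts as the user's identity, which is why the rest of this post focuses on controlling that one value.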

Azure AD also provides the ability to create claim transformations. Claim transformations can be useful for several reasons. A simple example is an application with case sensitivity requirements: one can apply ToLowercase() and not have to worry about modifying broader patterns in Azure AD to satisfy one application. Claims transformations are configured on a per-claim basis within each Enterprise Application that is using SAML.

Setting the stage¶

An effective use of spoofing the SAML response is to impersonate a highly privileged user in an application. For our example, let’s look at a few different user personas, in a somewhat simplified scenario:

- Nestor Wilke is an account owner in AWS, and because this is considered a highly privileged role, he has privileged credentials he uses for accessing AWS, p-nestor.wilke@fabrikam.cloud.

- Isaiah Langer is part of the Operations team that is tasked with providing support to Enterprise Applications, which provides rights over the AWS Enterprise Application. Groups are used for user assignment to AWS, and AWS role assignment happens in AWS IAM Identity Center. Isaiah can manage these applications with his normal user account, isaiah.langer@fabrikam.cloud. He has an account in AWS, but his privileges there are low.

Configuring our malicious transform¶

Once we gain access to an account with ownership over the application, we jump into Azure AD, browse to Enterprise Applications, and open the target application.

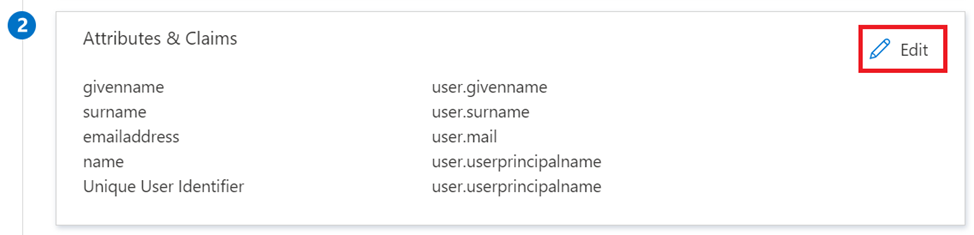

Within here, we will select Single sign-on in the left blade, and then under Attributes & Claims on the right blade, select Edit:

In the new blade that opens, we want to select the Unique User Identifier (Name ID) claim:

Note that in our example we will be using a transform on user.userprincipalname, but this same technique can apply to any attribute that is being used for NameID.

Within the Manage claim window, we want to select Transformation under Source. This will open a new Manage transformation blade on the right:

For spoofing, we need to know just two things – the UPN of the user we want to spoof, and the UPN of the user who will be used for performing the spoofing.

Under Transformation, select Contains(). For Parameter 1 (Input) select user.userprincipalname. For Value, we will enter isaiah.langer@fabrikam.cloud. For Parameter 2 (Output), do not select an attribute from the dropdown menu. Instead, click into the window within the menu and enter p-nestor.wilke@fabrikam.cloud.

We then check the box for Specify output if no match, and for Parameter 3 (Output if no match) select user.userprincipalname.

This last piece is what ensures that all other users accessing AWS will continue to have the same user experience, including Nestor. Our final transform will look like:

And we can confirm the pattern in the claims rule:

If 'user.userprincipalname' contains 'isaiah.langer@fabrikam.cloud' then output 'p-nestor.wilke@fabrikam.cloud'. Else, output 'user.userprincipalname'.

We select Add, and then Save.
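The logic of the rule we just saved can be modeled in a few lines. This is only a sketch of the rule's behavior as described above, not Azure AD's implementation: `contains_transform` and `name_id_for` are hypothetical names, and a plain case-sensitive substring test is assumed, which may differ from how Azure AD actually evaluates Contains().

```python
SPOOFER_UPN = "isaiah.langer@fabrikam.cloud"
TARGET_UPN = "p-nestor.wilke@fabrikam.cloud"


def contains_transform(input_value, match_value, output_if_match, output_if_no_match):
    """Model of the rule: if input contains match_value, emit a constant, else fall through."""
    # Assumption: simple substring match; Azure AD's matching semantics may differ.
    if match_value in input_value:
        return output_if_match
    return output_if_no_match


def name_id_for(upn):
    """NameID the application would receive for a given signed-in user."""
    return contains_transform(upn, SPOOFER_UPN, TARGET_UPN, upn)


for upn in (SPOOFER_UPN, TARGET_UPN, "some.user@fabrikam.cloud"):
    print(upn, "->", name_id_for(upn))
```

Only the spoofing account is rewritten; every other user, including the real privileged account, falls through to their own UPN, which is why nobody else notices a change.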

Using our malicious transform¶

Now that we have things configured, let’s look at this in action. Recall that Nestor is our target user, and Isaiah is the account that will be used for spoofing.

This is just one example. The same technique has been tested and proven with GCP and Salesforce and should be valid for any SAML application, whether from the application gallery or a LOB application.

Detecting malicious transforms¶

Unfortunately, the ability to detect a transform like this is difficult, as normal channels do not expose the data we expect and need.

Audit logging is broken¶

The first thought is to go to our Azure AD audit logs. After all, the change should be recorded in Azure AD, so we can ingest that data for detection.

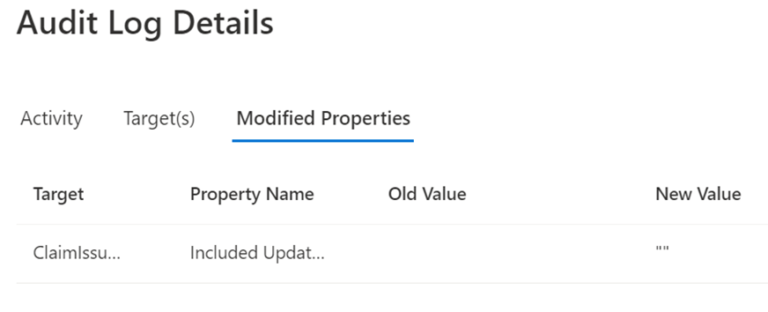

SAML claim policy auditing is, unfortunately, broken. If we look at our audit logs after Isaiah made the change, we will see the following:

We can drill into the Target(s) and see that there was a change made to a ClaimIssuancePolicy:

But when we look at the Modified Properties:

Note that there is nothing of value. Any SAML claim issuance policy changes in Azure AD show up the same way. To ensure this was not some sort of limitation of the portal itself, I looked at the data exported to Log Analytics:

I’ve had a support case open with Microsoft through standard channels on this, but there has been no movement.

Graph API does not expose SAML claim policies¶

The next place we might turn is the Microsoft Graph API, but again we are blocked. The Graph API does not expose SAML claim issuance policies. Customers have been asking for this since 2019, yet as of my most recent check with Microsoft it is still not supported.

Note

Microsoft has claimed to resolve this by making claim transforms available through the Graph API; however, it's a different set of transforms.

What are we left with?¶

As Azure AD does not provide a native means for easily tracking this, we are left with something of relatively low fidelity: alerting on all changes to a ClaimIssuancePolicy. This will fire on every change, good and bad. If there is little SAML application onboarding activity in the environment, this may suffice.
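As a rough sketch of that low-fidelity alert, the filter below flags any exported audit record whose target mentions a ClaimIssuancePolicy. The sample records are made up for illustration, and the field names (`activityDisplayName`, `initiatedBy`, `targetResources`, `type`, `displayName`, `modifiedProperties`) are assumptions about the export shape; check them against what your own tenant actually emits.

```python
# Hypothetical audit records; field names and values are assumptions, not real log output.
audit_records = [
    {
        "activityDisplayName": "Update policy",
        "initiatedBy": "isaiah.langer@fabrikam.cloud",
        "targetResources": [
            {"type": "Policy", "displayName": "ClaimIssuancePolicy", "modifiedProperties": []}
        ],
    },
    {
        "activityDisplayName": "Add member to group",
        "initiatedBy": "some.admin@fabrikam.cloud",
        "targetResources": [
            {"type": "Group", "displayName": "AWS-Users", "modifiedProperties": []}
        ],
    },
]


def is_claim_policy_change(record):
    """True if any target of the audit record references a ClaimIssuancePolicy."""
    for target in record.get("targetResources", []):
        haystack = " ".join(str(target.get(key, "")) for key in ("type", "displayName"))
        if "claimissuancepolicy" in haystack.lower():
            return True
    return False


alerts = [r for r in audit_records if is_claim_policy_change(r)]
for record in alerts:
    print(record["initiatedBy"], "changed a claim issuance policy")
```

Because the modified properties carry nothing useful, the alert can only say that a policy changed and who changed it; someone still has to open the application and inspect the claim by hand.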

From a retroactive analysis perspective, because these are not exposed through the Graph API, the only way to verify the policy settings is through manual inspection.

Using a SIEM platform for authentication log correlation may at first seem appealing, but consider that authentication times are likely the only mechanism for correlation. If the platform has a high volume of user activity, this creates an immense level of complexity: Azure AD and the application each record the time the user authenticated into their own system, and those timestamps may not align with the necessary precision.
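To show what such a correlation would involve, here is a minimal sketch that pairs each application login with Azure AD sign-ins that occurred shortly before it, and flags logins where no candidate sign-in belongs to the same user. The events, the 30-second tolerance, and the function name `unmatched_app_logins` are all illustrative assumptions; real logs would need clock-skew handling and filtering by application.

```python
from datetime import datetime, timedelta

# Illustrative events: (timestamp, identity). Not real log data.
idp_sign_ins = [
    (datetime(2023, 1, 10, 9, 0, 2), "isaiah.langer@fabrikam.cloud"),
    (datetime(2023, 1, 10, 9, 5, 0), "p-nestor.wilke@fabrikam.cloud"),
]
app_logins = [
    (datetime(2023, 1, 10, 9, 0, 4), "p-nestor.wilke@fabrikam.cloud"),
    (datetime(2023, 1, 10, 9, 5, 3), "p-nestor.wilke@fabrikam.cloud"),
]


def unmatched_app_logins(idp_events, app_events, tolerance=timedelta(seconds=30)):
    """Return app logins with no IdP sign-in by the same identity in the preceding window."""
    suspicious = []
    for app_time, app_user in app_events:
        # Candidate IdP sign-ins: at or before the app login, within the tolerance.
        candidates = [
            upn
            for idp_time, upn in idp_events
            if timedelta(0) <= app_time - idp_time <= tolerance
        ]
        if app_user not in candidates:
            suspicious.append((app_time, app_user, candidates))
    return suspicious


for app_time, app_user, candidates in unmatched_app_logins(idp_sign_ins, app_logins):
    print(app_time.isoformat(), app_user, "had no matching sign-in; nearby:", candidates)
```

With two events this looks easy. With thousands of sign-ins per minute, the candidate window for each login fills with unrelated users, and a legitimate sign-in by the spoofed user inside the same window hides the spoof entirely.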

Preventing malicious transforms¶

Preventing malicious transforms becomes even more important when our ability to properly monitor for them is inhibited.

From a prevention perspective it’s back to the basics. The following should apply for all Global Administrators, as well as Application Administrator or Cloud Application Administrator roles if your organization heavily relies on them for management of Enterprise Applications:

- Privilege separated from daily driver accounts

- Accounts should not be mail-enabled

- Cloud-sourced and not synchronized from Active Directory

- Azure AD PIM should be used with MFA requirement for elevation

- Roles scoped with a Conditional Access policy requiring MFA for Azure management

- Passwordless should be enabled and required for these accounts

- Accounts should be scoped for Azure AD Identity Protection

- Require a Privileged Access Workstation (PAW) for highly privileged operations

If you heavily delegate the owner role for specific Enterprise Applications, you may want to re-evaluate how you are securing the users with delegated access. While it’s unlikely businesses will accept the model of privilege separation or a PAM implementation for the HR administrator team that owns Workday, it may be a place to reconsider if those delegations are necessary. If delegation primarily exists to assign membership to the application, the model could be changed to instead provide delegated ownership over the groups that are already assigned, or leverage entitlement management for application access lifecycle.

The surface area for abuse by a threat actor can be reduced by application conditional access requiring compliant devices for all users with proper endpoint management in place, but from the perspective of insider threat or certain token theft scenarios, this may not be sufficient.

Heavily restricted Conditional Access policies will not prevent user impersonation, as all CA policies are evaluated prior to the issuance of the SAML response. In our example, even if Nestor was restricted to using a PAW for accessing AWS, Isaiah would have been able to access AWS under whatever conditions CA was applying to his user account. If the application requires user assignment, or if a CA policy specifically blocked Isaiah from access, the risk is mitigated. For many business-critical applications, such as Workday, it’s highly likely that blocking scenarios cannot be applied as the application is used across the workforce. Using mechanisms such as role assignment may be circumvented with a combination of claim conditions and transforms.

If the target application provides its own mechanisms for privilege escalation management, these may also stop impersonation, as the user would be prompted to elevate privilege for the spoofed user within the target.

Responsible disclosure¶

When I initially came up with my proof case, I opened a case with MSRC, indicating that claims transforms should not allow a constant output for NameID, as it enables spoofing. Even though this is not a security risk within Azure AD itself, proper controls should exist within the IdP to ensure that associated applications are not overly exposed to a security risk such as NameID spoofing.

- August 30th, 2022: Opened case with MSRC

- October 10th, 2022: Response from MSRC with case closed, “We determined that this behavior is considered to be by design.”

Likewise, a support case with Microsoft has been opened regarding deficiencies with SAML claim audit logging.

- October 20th, 2022: Opened support Sev C case with Microsoft

- October 26th, 2022: First response from SE with inaccurate understanding of case

- October 27th, 2022: Reply to SE with additional clarity

- November 8th, 2022: SE calls at a random time, follows up with email that a session will be scheduled on November 14th, at 9:30am EST

- November 14th, 2022: SE does not call at 9:30am EST

- November 14th, 2022: SE calls at 12:30pm EST, I request a call back at 1:00pm EST, SE agrees to call back at 1:00pm EST

- November 14th, 2022: SE does not call at 1:00pm EST

- November 17th, 2022: SE emails and schedules a call for November 18th, 2022 at 10:00am EST

- November 18th, 2022: SE does not call at 10:00am EST

- November 21st, 2022: SE emails indicating that I did not answer my phone at an indeterminate time. I check with my phone carrier; no call was ever received.

- November 28th, 2022: SE calls me outside of working hours.

- November 28th, 2022: I reply to an email with times that the SE can reach me

- December 9th, 2022: SE calls, able to finally have the screen sharing session. Walk the SE through the details I provided in my case showing the behavior of these changes not being properly audited. SE indicates that the case needs to be escalated.

- December 14th, 2022: SE calls me to let me know the case is still being investigated.

- January 9th, 2023: SE contacts me to let me know I need to reproduce the issue for fresh data for MS.

- January 25th, 2023: SE emails me to let me know the case is still being investigated.

- February 16th, 2023: SE emails me to let me know the case is still being investigated.

- February 20th, 2023: SE contacts me to let me know I need to reproduce the issue for fresh data for MS.

- March 15th, 2023: Case is re-assigned to an “Azure Support Ambassador”. It’s indicated that the case is still being investigated.

- March 17th, 2023: I email the Support Ambassador asking if it would be possible to find out more details about the case directly from engineering.

- March 27th, 2023: Support Ambassador emails me to let me know that a bug has been filed to be fixed at a later date.

- March 29th, 2023: Support closes the case without asking if it’s alright to do so.